Choosing the wrong document automation tool costs you twice. Once when you pay for it, and again when your team quietly goes back to copying deal data into Google Docs by hand.

I've watched this happen more times than I'd like. Someone books a demo, the PDF looks great, the team signs up, and three months later reps have abandoned it because line item mapping was clunky, approvals still lived in Slack, and the CRM didn't know a document had been sent.

This post is my working checklist for evaluating document automation as a HubSpot partner. I'll link to the solution areas I map most often, including quotes, proposals, and contracts, because your evaluation should follow the documents that actually close revenue.

If you want a tools-level view of the market, read "Best document automation tools for HubSpot in 2026" alongside this. And if you want integration specifics, start with our HubSpot integration overview so you know what deep sync looks like when it's done well.

Why this evaluation goes wrong so often

Document automation sits between CRM data, brand templates, legal language, and customer experience. Buyers tend to optimise for the prettiest PDF or the fastest first demo. That's understandable, but it's also how you end up with a tool reps abandon because mapping line items took four clicks per row.

Before you shortlist vendors, separate three layers: data fidelity from HubSpot into the document, governance for who can send what, and lifecycle signals that return to HubSpot after send and signature. If you only evaluate the first layer, you'll buy something that creates pretty files and weak reporting.

Another common mistake is letting IT own the decision alone, or letting sales own it alone. IT cares about SSO and audit logs, sales cares about speed, and legal cares about version control. You need all three perspectives in the same scorecard, or you'll optimise for one group and surprise the others at go-live.

The buyer checklist I use on every call

I keep a literal checklist. You can copy it into Notion and score each vendor from one to three, or use it as a script during a live build session.

- HubSpot object fit. Does the product create or update records you can filter, report on, and include in workflows? Or is the document trapped in a separate silo?

- Field mapping. Can you map standard properties, custom properties, calculated fields, and associations without opening a ticket for every tweak?

- Line items. For quotes and proposals, do tables handle discounts, bundles, and rounding the way finance expects?

- Template ownership. Who updates language when pricing changes, and how long does that take in real life?

- Approval rules. Can you route exceptions through approval workflows without turning every deal into a committee?

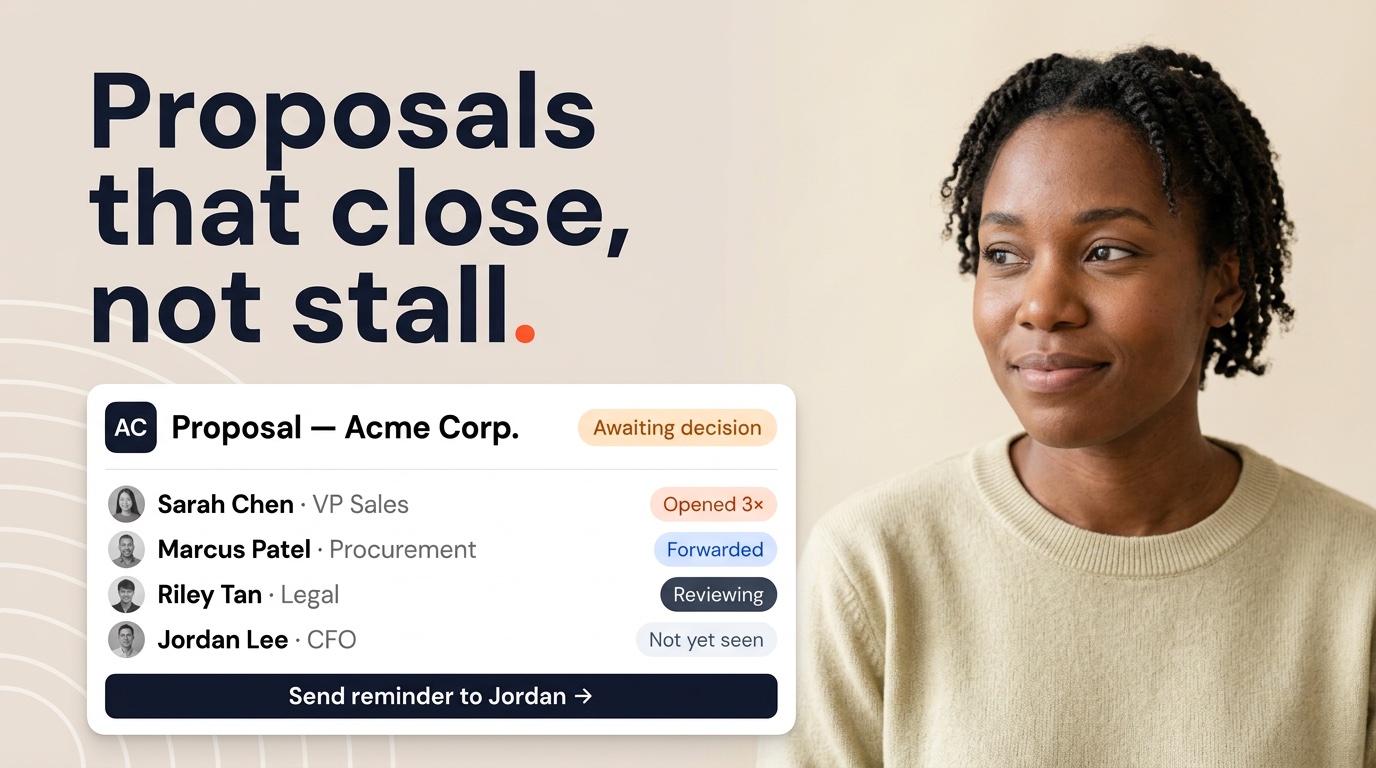

- eSign events. Do sent, viewed, partially signed, and completed states sync back to HubSpot in properties your team already uses?

- Audit trail. Can you answer who approved, who sent, and which version the customer saw?

- Reporting. Can leadership see stalled documents without a weekly CSV export?

I treat any "we can probably do that" answer as a yellow flag until I see it on a real deal record. Use your sandbox for this. Pick an ugly deal with messy line items, because that deal is more honest than a polished sample company.

Tip: Score vendors on the messiest template first. If the hard template works, the easy ones are rarely a problem.
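
If you'd rather keep the scorecard in code than in Notion, here's a minimal sketch of the one-to-three scoring. The criterion keys and the `needs_proof` idea (anything scored below three) are my own shorthand, not a standard, so rename them to match your checklist.

```python
# The eight checklist rows from this post, as hypothetical short keys.
CRITERIA = [
    "object_fit", "field_mapping", "line_items", "template_ownership",
    "approval_rules", "esign_events", "audit_trail", "reporting",
]


def score_vendor(scores: dict) -> dict:
    """Total a vendor's 1-3 scores and list rows that still need evidence."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"unscored criteria: {missing}")
    for name in CRITERIA:
        if scores[name] not in (1, 2, 3):
            raise ValueError(f"{name} must be 1, 2, or 3")
    return {
        "total": sum(scores[c] for c in CRITERIA),
        # Assumption: anything below 3 has not been proven on a real deal yet.
        "needs_proof": [c for c in CRITERIA if scores[c] < 3],
    }
```

The total is less interesting than the `needs_proof` list. That list is the agenda for your next live session with the vendor.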

Security and compliance without the buzzwords

Security reviews can turn into PDF exchanges that teach you nothing. I focus on a smaller set of questions that map to how sales documents actually move.

Ask where customer and deal data lives when a document generates. Ask whether tokens resolve server-side in a way that stops reps from accidentally leaking fields into the wrong template. Ask how long files persist, who can download them, and whether links expire. If your company cares about data residency, ask about subprocessors and regions too.

For access control, I want to know how admin roles differ from sender roles, and whether you can offboard a rep without hunting down orphaned links. For signatures, I want clarity on identity options and what your legal team considers acceptable for your contract types.

If your security team uses a standard questionnaire, send it early. The worst time to learn a vendor can't meet a hard requirement is the week before rollout. I also compare answers to what your HubSpot portal already allows. The document tool shouldn't be the weakest link in a chain you've already trusted.

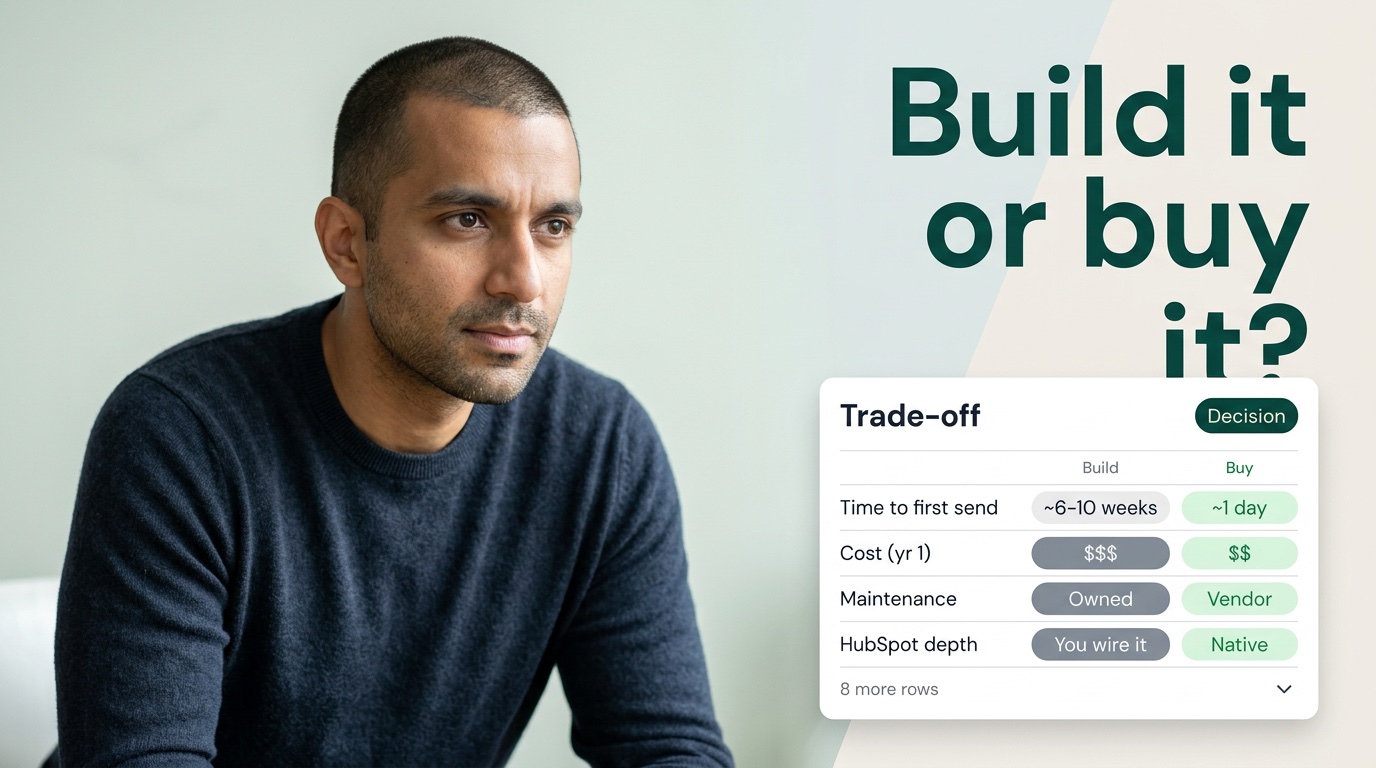

HubSpot depth beyond the marketing slide

Every vendor says they integrate with HubSpot. I translate that claim into observable behaviour: properties that change when a document moves through its lifecycle, workflows that fire on those changes, and lists that managers can use in pipeline review without exporting to a spreadsheet.

Shallow integrations often stop at activity logging or a file attachment. That can be fine for lightweight use cases, but it breaks down when you try to run revenue operations at scale.

Deep integrations let you treat documents as part of the same deal record, the same reporting layer, the same pipeline view. That's how you coach stalled agreements, forecast with less guesswork, and give customer success a clean handoff.
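
To make "properties that change when a document moves through its lifecycle" concrete, here's a rough sketch of the behaviour I look for. It's plain Python, not any vendor's implementation, and the property names (`document_status`, `document_sent_at`, and so on) are hypothetical placeholders for whatever your portal uses.

```python
# Lifecycle states named in this post, in the order a document normally moves.
LIFECYCLE_ORDER = ["generated", "sent", "viewed", "partially_signed", "completed"]


def apply_event(deal_props: dict, event: str, occurred_at: str) -> dict:
    """Return a copy of the deal properties updated for one document event."""
    if event not in LIFECYCLE_ORDER:
        raise ValueError(f"unknown document event: {event}")
    updated = dict(deal_props)
    # Always record the timestamp so reports can measure each stage.
    updated[f"document_{event}_at"] = occurred_at
    current = deal_props.get("document_status")
    # Only move the status forward, so a late or duplicate event
    # can't push a viewed document back to "sent".
    if current is None or LIFECYCLE_ORDER.index(event) > LIFECYCLE_ORDER.index(current):
        updated["document_status"] = event
    return updated
```

If a vendor's sync behaves roughly like this, workflows and lists can key off a single status property plus per-stage timestamps, which is what makes pipeline review possible without an export.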

When I map use cases, I align document types to HubSpot realities. Quotes need tight line item behaviour, proposals need flexible sections and optional appendices without breaking brand, and contracts need signer roles and clause control that match how your entity actually signs. If a vendor shines on proposals but limps on contracts, know that before you commit.

I also ask how the tool behaves when HubSpot data is wrong. A good implementation surfaces missing required fields early. A brittle one lets reps send incomplete documents and then blames the CRM.
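
Here's a small sketch of what "surfaces missing required fields early" can look like as a pre-send check. The required list is an assumption for illustration, and `billing_contact_email` in particular is a made-up property name. Swap in the fields your templates actually depend on.

```python
# Hypothetical list of deal properties a template needs before it can send.
REQUIRED_DEAL_FIELDS = ["dealname", "amount", "closedate", "billing_contact_email"]


def is_blank(value) -> bool:
    """Treat None and empty or whitespace-only strings as missing."""
    return value is None or (isinstance(value, str) and value.strip() == "")


def missing_required(deal: dict, required=REQUIRED_DEAL_FIELDS) -> list:
    """Return the required properties that are absent or blank on the deal."""
    return [name for name in required if is_blank(deal.get(name))]


def can_send(deal: dict) -> bool:
    """Block the send when anything required is missing."""
    return not missing_required(deal)
```

The point isn't the code. It's that the rep sees the list of gaps before the customer sees a blank in the document.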

Line items deserve their own proof. I ask for a deal with tiered discounts, optional add-ons, and at least one custom property that affects price narrative. If the table breaks or needs manual edits, your quotes will leak trust before the customer reads the cover letter.
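
Before the vendor session, I like to know what finance expects the numbers to be. A minimal sketch of a reference calculation follows, assuming percentage discounts and half-up rounding at line level. Both are assumptions, so confirm the rounding rule with your finance team before you treat this as the answer key.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def line_total(unit_price: str, quantity: int, discount_pct: str = "0") -> Decimal:
    """Line total after a percentage discount, rounded once per line."""
    gross = Decimal(unit_price) * quantity
    net = gross * (Decimal("1") - Decimal(discount_pct) / Decimal("100"))
    # Decimal avoids float drift; ROUND_HALF_UP is an assumed finance rule.
    return net.quantize(CENT, rounding=ROUND_HALF_UP)


def quote_total(lines: list) -> Decimal:
    """Sum of rounded line totals; each line is (unit_price, quantity, discount_pct)."""
    return sum((line_total(*line) for line in lines), Decimal("0"))
```

For example, `line_total("19.99", 3, "12.5")` gives `Decimal("52.47")`. If the vendor's table shows a different figure for the same deal, you've found a rounding or discount-order mismatch worth raising.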

When teams sell multi-entity deals, I confirm how company and contact associations flow into signer blocks for contracts. A tool that assumes one bill-to and one signatory per deal will frustrate enterprise motions. Map those scenarios during evaluation, not after the purchase order clears.

How to run a pilot that survives contact with reality

Pilots fail when they're too polite. If you let reps keep their old workflow as a backup, you won't learn whether the new tool wins.

I recommend a bounded pilot with clear success metrics and a single workflow owner who can settle disputes between sales and legal.

Pick one segment or product line, not the entire company. Choose a timeline that covers at least a few full deal cycles. Measure the time from a deal being ready to the document being sent, the time from send to signature, and the error rate on fields that used to be manual.
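
A rough sketch of how those pilot measures could be computed from an export of pilot documents. The record keys (`ready_at`, `sent_at`, `signed_at`, `field_errors`) are hypothetical, and I've used medians because one stalled deal can distort an average.

```python
from datetime import datetime
from statistics import median
from typing import Optional


def hours_between(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600


def median_or_none(values: list) -> Optional[float]:
    return median(values) if values else None


def pilot_metrics(documents: list) -> dict:
    """Summarise speed and field accuracy for a batch of pilot documents."""
    ready_to_send = [
        hours_between(d["ready_at"], d["sent_at"])
        for d in documents if d.get("sent_at")
    ]
    send_to_sign = [
        hours_between(d["sent_at"], d["signed_at"])
        for d in documents if d.get("sent_at") and d.get("signed_at")
    ]
    # Share of documents with at least one wrong or hand-corrected field.
    with_errors = sum(1 for d in documents if d.get("field_errors", 0) > 0)
    return {
        "median_ready_to_send_hours": median_or_none(ready_to_send),
        "median_send_to_sign_hours": median_or_none(send_to_sign),
        "field_error_rate": with_errors / len(documents) if documents else None,
    }
```

Capture the same numbers for the old process before the pilot starts, otherwise you have nothing to compare against.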

Add a qualitative weekly pulse from reps, not just managers. Managers often hear what reps think they want to hear.

Include the people who can say no today. If legal always blocks certain language, bring them into the pilot templates early. If finance cares about margin floors, encode those rules in approvals rather than hoping discipline will appear on its own.

When the pilot ends, decide based on behaviour, not slides. Did reps use the system without shadow processes? Did HubSpot reporting improve? If yes, you've got something worth scaling. If no, fix the workflow or pick a different tool before you train two hundred people.

How I score vendors without a spreadsheet cult

Some teams turn evaluations into fifty-row matrices where every cell says "partially supported." I prefer fewer rows and sharper evidence.

For each requirement, I ask for a timestamped recording or a live session on our data. If a vendor pushes back on using our sandbox, I assume the demo is optimised for their sample tenant, not ours.

I also separate "available" from "adopted." A feature buried three menus deep might as well not exist for a busy rep. I watch how many clicks it takes to generate from a deal, how obvious rejection feedback is for legal, and whether managers can see stalled sends without exporting.

Finally, I talk to references in a similar HubSpot shape. Same object model complexity, same approximate deal volume, same mix of quotes and contracts. A reference from a ten-person team rarely predicts behaviour for a three-hundred-person org.

Contracts, approvals, and the questions legal actually asks

Legal rarely cares about your integration diagram. They care whether the right version went out, whether non-standard terms were flagged, and whether they can prove who approved what.

When I evaluate approval workflows, I bring a real redline story from the last quarter. If the tool can't reproduce that path without email chains, I note the gap explicitly.

For contracts specifically, I check signer order, counterparty roles, and what happens when a customer forwards the envelope to someone unexpected. I also ask how amendments and renewals inherit data from the original deal so CS isn't retyping terms six months later.

Finance often joins late in the process. I pull them in during the pilot, not after go-live. Discount authority and margin floors belong in rules, not tribal knowledge. Stress those exceptions in your evaluation, because that's where silent workarounds appear.
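
To show what "rules, not tribal knowledge" might look like, here's a minimal sketch of an approval policy. The thresholds and approver names are invented for illustration, and a real tool would express this in its own rule builder rather than in Python.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovalPolicy:
    # Hypothetical thresholds; set these with finance and sales leadership.
    max_rep_discount_pct: float = 10.0
    margin_floor_pct: float = 30.0


def approvals_needed(discount_pct: float, margin_pct: float,
                     nonstandard_terms: bool,
                     policy: ApprovalPolicy = ApprovalPolicy()) -> list:
    """Return the approvers a deal needs; an empty list means the rep can send."""
    needed = []
    if discount_pct > policy.max_rep_discount_pct:
        needed.append("sales_manager")
    if margin_pct < policy.margin_floor_pct:
        needed.append("finance")
    if nonstandard_terms:
        needed.append("legal")
    return needed
```

A standard deal returns an empty list and goes straight out, which is how you avoid turning every deal into a committee. Only the exceptions route to a person.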

Where to go after the shortlist is clean

Once your checklist scores are honest, narrow to two options and run parallel builds on the same deal. That exercise is tedious and valuable. You'll feel mapping friction, approval friction, and reporting friction in a way no sales deck reproduces.

I work with Portant as a partner when teams want HubSpot to stay at the centre of their document lifecycle. I still recommend you run the same checklist on us as you would on anyone else. The goal is fit, not loyalty to a logo.

If you're early in the journey, pair this post with "Best document automation tools for HubSpot in 2026" for a market-level view. Keep the HubSpot integration documentation open while you test events in a sandbox. And if approvals are your riskiest area, spend extra time in approval workflows until the path feels boring. Boring is good. Surprises at month three aren't.

After you sign the contract, the real evaluation starts

Buying isn't the finish line. The first thirty days determine whether reps trust the system.

I schedule a weekly fifteen-minute standup with ops, a template owner, and a frontline rep. We review failures, not successes. A missed mapping, a confusing approval state, or a signer error gets logged and fixed before it becomes folklore.

I also define what "done" means for enablement. Recorded training, office hours, and a short internal playbook beat a fifty-slide deck nobody opens. Buyers who skip change management get false negatives on perfectly capable software.

If you treat evaluation as a continuous loop, you'll keep improving templates and rules long after the procurement process ends. That mindset matters more than whichever logo you picked in week one.

Frequently asked questions

What belongs on a shortlist when I evaluate document automation?

I start with object fit in HubSpot, field and line item mapping, template ownership, approval paths, eSign lifecycle sync, auditability, and reporting without exports. If a vendor can't demo those on your data, I don't advance them.

How should I assess security and compliance without drowning in jargon?

Ask where data lives at rest and in transit, who can access templates and envelopes, how admin roles are separated, what logs exist for sends and signatures, and how the vendor handles subprocessors. Then map answers to your own security questionnaire instead of trusting a glossy one-pager.

What does deep HubSpot integration mean in real life?

Deep integration means generated, sent, viewed, and signed states write back to HubSpot properties or associated records so workflows, lists, and dashboards react without manual updates. Shallow integration often stops at a PDF attachment or a single activity note.

How do I run a pilot that predicts rollout success?

Pick one messy deal type, involve legal or finance if they block sends today, measure time to first send and time to signature, and require reps to use the tool for two weeks without a shadow process in email. If people quietly revert to old habits, that's your signal.

Who should be in the room for the final decision?

I want rev ops or sales ops for object design, a template owner from sales or solutions, legal or finance if approvals matter, and IT or security for access and data handling. Missing any one of those groups is how you buy software the org won't adopt.